Today, AI based on neural networks is at a very interesting stage of its development. It has clearly taken off: we see numerous applications, from reading CT scans to picking fruit. However, adoption rates vary a lot. Recommendation engines, customer support bots, and other things that have been called “internet AI” are widespread; we encounter them everywhere in our lives. Most other areas, including software testing, aren’t quite there yet, though.

What makes AI widely adopted, and what’s tripping it up? Let’s take a look at our field, software testing. Specifically, I want to see how well neural networks can generate automated tests and what barriers exist to mass adoption of AI in our field.

I’ll be looking first and foremost at the problems and difficulties, not because I’m trying to be glass-half-empty but because things that go wrong tell us more about how a system works than things that go right.

Once we’re done with test generation, I want to look at AI's applications in other fields, like medicine, where a lot of work is being done, especially with reading scans. Maybe they have similar growing pains?

Finally, for a bird’s-eye view, I’ll look at a completely different industry that has matured and solidified. What can its experience tell us about the evolution of AI today?

With this perspective, perhaps we can stop the hype/disillusionment pendulum that plagues new technologies, and maybe get a glimpse of where AI is going.

Acknowledgments

Before we go on, I'd like to thank Natalia Poliakova and Artem Eroshenko for the time and expertise that they've lent me with this article. However, the responsibility for everything written lies squarely with me.

Also, a big thank you to Danil Churakov for the awesome pictures in this (and other) posts!

Generating tests: where it’s at now

Today, ChatGPT, GitHub Copilot, and other AI-driven tools are being used quite widely to write code and tests, and we’ve recently had a very interesting interview about it.

However, people are still mostly writing automated tests by hand, and the usage of neural networks is mostly situational. The rate at which the networks hit the mark in this field is still way below the human norm.

A late-2020 paper managed to correctly predict 62% of assertion statements in tests, and that was definitely a brag-worthy result. According to GitHub Copilot’s FAQ, its suggestions are accepted 26% of the time. People often say its best use is as a quicker alternative to Google and StackOverflow. So: situational usage.

For now, AI in software QA can’t boast the same level of success as in, e.g., marketing, where digital marketing and data analysis strategies created by neural networks are actually superior to those created by humans.

Where do these issues stem from? Let’s ask around and do some hands-on research. I’ve talked to my experienced tester colleagues and also tinkered with Machinet, an extremely popular plugin for IntelliJ IDEA that generates unit tests.

The neural networks we’ve worked with are very useful for their intended applications. Machinet was likely created as a developer assistant rather than an all-purpose tool. Its motto definitely sounds like it’s aimed at devs rather than QA: “Let's face it. Unit testing is a chore. Just automate it”.

That makes sense: devs usually write the unit tests. And for a person without deep knowledge of testing, the tool does an excellent job. The faults become visible only when we look at the results from a QA perspective. So, what issues were there?

The issues

Let’s get something out of the way: I’m not trying to shame a good piece of tech or nitpick. I’m mostly talking about problems because they tell us a lot about where technology could go. So, what can we say about the generated tests?

Excessive coverage

The Machinet plugin does its job thoroughly: it covers if-else branches, uses different data types, checks character limits, etc. In fact, it does the job too thoroughly and can generate too many tests with no regard for resource load.

For instance, checking that a field accepts any character and checking that it restricts string length to 255 characters can easily be done in a single test, but Machinet generated a separate test for each case.
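The following minimal sketch shows what a combined test might look like. The validator `validate_field` is hypothetical: it is assumed to accept any characters up to the 255-character limit. The sketch uses Python's `unittest` with `subTest`, so each case is still reported separately while living in one test.

```python
import unittest

MAX_LEN = 255  # length limit from the example above


def validate_field(value: str) -> bool:
    """Hypothetical validator: any characters are allowed, up to MAX_LEN of them."""
    return len(value) <= MAX_LEN


class FieldValidationTest(unittest.TestCase):
    def test_accepts_any_character_within_length_limit(self):
        # Character variety and the length boundary are checked in one test;
        # subTest reports each failing case individually.
        cases = {
            "ascii": ("abc123", True),
            "unicode": ("Ünïcødé ✓", True),
            "punctuation": ("!@#$%^&*()", True),
            "at_limit": ("x" * MAX_LEN, True),
            "over_limit": ("x" * (MAX_LEN + 1), False),
        }
        for name, (value, expected) in cases.items():
            with self.subTest(case=name):
                self.assertEqual(validate_field(value), expected)
```

Run it with any standard runner, e.g. `python -m unittest`.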

Another example involved a data retrieval function used by several API methods. The generated tests would cover the function in every one of the methods, and a separate test would be created for the function itself. Again, we’re getting excessive coverage.

The impression was that if we had several intersecting data sets, the plugin would try to cover all possible intersections.

Say you’re testing an e-commerce website with multiple checkout options (delivery or customer pick-up, payment type, etc.). With Machinet, all possible combinations will probably be tested, whereas a human tester could use a decision table to cover only the combinations that really matter. However, doing this would probably require contextual data that isn't fed into the neural network.
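Here is a rough illustration of the difference, under made-up assumptions. The options and the business rule "cash is not accepted for postal delivery" are hypothetical, and customer type is assumed not to affect the outcome. The exhaustive approach enumerates every combination. The decision table keeps one row per combination of the conditions the rule actually depends on.

```python
from itertools import product

# Hypothetical checkout options, for illustration only.
DELIVERY = ["courier", "pick-up", "post"]
PAYMENT = ["card", "cash", "wallet"]
CUSTOMER = ["guest", "registered"]


def is_allowed(delivery: str, payment: str) -> bool:
    """Hypothetical rule under test: cash is not accepted for postal delivery."""
    return not (delivery == "post" and payment == "cash")


# Exhaustive approach: every combination of options becomes a test case.
all_combinations = list(product(DELIVERY, PAYMENT, CUSTOMER))

# Decision table: one row per combination of the two conditions
# ("is it postal delivery?" x "is it cash?").
DECISION_TABLE = [
    # (delivery, payment, expected)   post? / cash?
    ("post", "cash", False),          # Y / Y
    ("post", "card", True),           # Y / N
    ("courier", "cash", True),        # N / Y
    ("pick-up", "wallet", True),      # N / N
]

for delivery, payment, expected in DECISION_TABLE:
    assert is_allowed(delivery, payment) == expected

# prints "18 exhaustive cases vs 4 decision-table rows"
print(len(all_combinations), "exhaustive cases vs", len(DECISION_TABLE), "decision-table rows")
```

Choosing those four rows relies on knowing the rule, which is exactly the contextual data mentioned above.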

Code repetition

A related issue is code repetition.

We were testing a collection that had to be populated with objects and then used in four different tests. Each test re-created the collection from scratch instead of moving its setup into a separate field or function.

Moreover, a function for generating the collection already existed in the code, and it did essentially the same thing that was done in the tests, but the plugin didn’t use it.
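A human-written version would typically build the fixture in one place and reuse the existing helper. In this minimal sketch, `Order` and `build_sample_orders` are hypothetical stand-ins for the project's own class and the helper that already existed. `setUp` runs before every test, so each of the four tests still gets a fresh collection.

```python
import unittest
from dataclasses import dataclass
from typing import List


@dataclass
class Order:
    order_id: int
    amount: float


def build_sample_orders() -> List[Order]:
    """Hypothetical stand-in for the helper that already existed in the code base."""
    return [Order(1, 10.0), Order(2, 25.5), Order(3, 4.5)]


class OrderCollectionTest(unittest.TestCase):
    def setUp(self):
        # The fixture is built in one place, reusing the existing helper.
        self.orders = build_sample_orders()

    def test_size(self):
        self.assertEqual(len(self.orders), 3)

    def test_total_amount(self):
        self.assertAlmostEqual(sum(o.amount for o in self.orders), 40.0)

    def test_ids_are_unique(self):
        ids = [o.order_id for o in self.orders]
        self.assertEqual(len(ids), len(set(ids)))

    def test_amounts_sorted(self):
        self.assertEqual(sorted(o.amount for o in self.orders), [4.5, 10.0, 25.5])
```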

How well could a neural network use something that local? It would be tempting to say: well, this is a unique situation that the network has never encountered before. But a neural network has no trouble recognizing a photo of a cat that it has never seen before. That is an interesting problem that we’ll return to below.

Understanding complex code

The automated tests generated by neural networks are at their best when they cover simple, modular pieces of code. Of course, it would be awesome if all code was written that way, but that might not be possible or feasible.

And when you need to cover a long and complex piece of business logic, the reliability of machine-generated tests suffers a lot. Sure, they will be generated, but it will be difficult to figure out if they cover things properly or if it’s just random stuff that can barely be counted on for smoke testing.

Understanding context

I’ve touched upon this when speaking about excessive coverage: the network doesn’t know what the method is used for, and so can’t optimize based on that knowledge.

For instance, a human tester knows that if we’re testing an API method in Java, we should check for null values; this can be skipped if it isn’t an API. The networks we’ve seen don’t seem to make decisions like that.
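In Java this would be a `null` check. The sketch below is a Python analogue, with `None` in its place and `ValueError` standing in for whatever exception the API contract specifies. Both functions are hypothetical. The point is that the public entry point `find_user` gets a None test, while the internal helper `normalize_email` does not, because only module code calls it.

```python
import unittest


def normalize_email(email: str) -> str:
    """Internal helper: only called from inside the module with a real string."""
    return email.strip().lower()


def find_user(email):
    """Hypothetical public API method: external callers may pass None."""
    if email is None:
        raise ValueError("email must not be None")
    return {"email": normalize_email(email)}


class FindUserApiTest(unittest.TestCase):
    def test_rejects_none(self):
        # Worth testing because find_user is exposed to external callers.
        with self.assertRaises(ValueError):
            find_user(None)

    def test_normalizes_email(self):
        self.assertEqual(find_user("  Alice@Example.COM ")["email"], "alice@example.com")
```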

Usage of external tools and patterns

We've also noticed that Machinet populated the collection with hard-coded values instead of using a library to generate data randomly. I don’t know if this is too much of an ask, but there is a related issue: the neural networks we’ve worked with don’t handle external dependencies well, and they also struggle with using patterns.
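In a real project, a data-generation library such as Faker would usually fill this role. To stay self-contained, this sketch uses only Python's standard `random` module. The `Customer` type and the value ranges are hypothetical. A fixed seed keeps the "random" data reproducible, so a failing run can be repeated exactly.

```python
import random
import string
from dataclasses import dataclass
from typing import List


@dataclass
class Customer:
    name: str
    age: int


def random_customers(count: int, seed: int = 42) -> List[Customer]:
    """Generate hypothetical test customers from a seeded random generator."""
    rng = random.Random(seed)  # independent generator, reproducible for a given seed
    alphabet = string.ascii_letters + string.digits
    customers = []
    for _ in range(count):
        length = rng.randint(1, 255)  # stays within the 255-character limit mentioned earlier
        name = "".join(rng.choices(alphabet, k=length))
        customers.append(Customer(name=name, age=rng.randint(18, 99)))
    return customers
```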

Cleanup

Finally, the code generated by the networks needs to be thoroughly reviewed. Of course, all code written by humans needs to be reviewed, too; this is standard practice, and the creators of AI-based tools always warn about this. This has to be kept in mind when developing such tools: there will be a lot of back-and-forth, and making editing easy should be a prime consideration.

But there is another reason why this might be a problem. As I’ve said, Machinet seems to be aimed at developers who might not be too familiar with testing - which means the person who will review the machine's work likely won’t be able to correct all the flaws we've just talked about.

The reasons behind the issues

So, here are the problems:

- the tools we’ve been working with aren’t as good when deep knowledge of the local project is needed

- they don’t “understand” the context of the methods they are testing

- they aren’t good at optimizing code in terms of repetition or resource usage

- they work best on code that is simple and modular

- the code they create tends to be this way, too (granted, that might not be a problem)

At first glance, these might seem like fairly deep issues, but maybe this is because we’re talking about “understanding” and not about data.

Unsurprisingly, the network can’t optimize code based on resource consumption if it isn’t trained on data for RAM and CPU usage. ChatGPT does not know your particular project because it has not been trained on it.

In the end, these hurdles aren’t intrinsic to code. It’s just a question of economics: whether or not it’s feasible to train a network on a particular data set.

Is the deeper reason code itself?

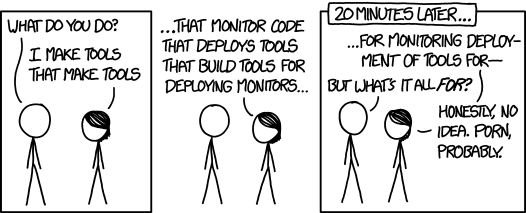

However, there is a deeper issue here: code is different from human language. That problem has been well-researched, and it’s been tripping up algorithms for some time now.

If a natural language like English is a tool that you use mostly “as is”, then a programming language is a tool that you use to build tools to build tools to build whatever. It’s much more nested.

Every one of these tools - new functions, variables, and modules - gets its own name. That has consequences.

For one, these names can get long and unintelligible - Java, in particular, is notorious for VariableNamesThatLookAndFeelLikeTrainWrecks.

More importantly, you get a hell of a lot of new names. For instance, the source code for Ubuntu can have two orders of magnitude more unique “words” (well, tokens) than a large corpus of texts in English. That is a very large and very sparse vocabulary; both are bad news for any algorithm - rare words mean there is less data to train on, and vocabulary size affects the speed and memory requirements of the algorithms.
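To make "vocabulary" concrete, here is a rough sketch that counts identifier-like tokens in a snippet. It reports the total number of tokens, the number of unique ones, and how many appear only once (the rare words that give an algorithm little to learn from). The regex tokenizer is a naive assumption, not how any particular model tokenizes code, and it counts keywords like `def` as well.

```python
import re
from collections import Counter
from typing import Tuple

# Naive tokenizer: anything that looks like an identifier or keyword.
TOKEN = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def vocabulary_stats(source: str) -> Tuple[int, int, int]:
    """Return (total tokens, vocabulary size, tokens occurring exactly once)."""
    counts = Counter(TOKEN.findall(source))
    total = sum(counts.values())
    singletons = sum(1 for n in counts.values() if n == 1)
    return total, len(counts), singletons


snippet = """
def load_user_profile(user_id):
    raw_profile = fetch_profile(user_id)
    return UserProfile.from_raw(raw_profile)
"""
print(vocabulary_stats(snippet))  # prints (10, 8, 6)
```

Even in three lines, most of the vocabulary occurs only once.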

These nested vocabularies are also very localized. Most words that you use inside your project will never see the outside. That’s the whole point of encapsulation: the fewer things stick out, the easier the code is to use.

As a result, you’ve got application- and even developer-specific vocabularies. In addition, the tooling people use also changes constantly, and that breeds new vocabulary, too.

Aside from all that, there is also style: our code can be pretty opinionated. Was that thing you just wrote Pythonic? Or are you still dragging around your old Java habits? The same problem can be solved in different ways, creating further confusion.

So, imagine your tech stack. You’ve got your language (maybe several languages), your framework, your database, all the libraries you have at the front and back end, the patterns you use, and the quirks of the developers. These intersecting contexts severely limit the amount of similar code that an AI-driven tool can learn from. It’s no wonder, then, that such tools have trouble generating complex code.

The issue is deep but not deep enough

Sure, this is a problem, but it’s far from insurmountable. The thing is, within the context defined by language, framework, project, etc., code is actually much more repetitive than human language (also, see this). And that repetitive part is the dominating one. One study found that a large collection of JavaScript files contained 2.4 million unique identifiers, but only 10k of those were responsible for 90% of all occurrences.
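The measure behind that 10k-out-of-2.4-million figure can be sketched as follows: sort identifiers by frequency and count how many of the most frequent ones are needed to reach 90% of all occurrences. The counts below are made up purely to show the calculation. They are not data from the study.

```python
from collections import Counter


def identifiers_for_coverage(counts: Counter, share: float = 0.9) -> int:
    """Return how many of the most frequent identifiers cover `share` of all occurrences."""
    total = sum(counts.values())
    covered = 0
    for rank, (_, occurrences) in enumerate(counts.most_common(), start=1):
        covered += occurrences
        if covered >= share * total:
            return rank
    return len(counts)


# Toy, made-up counts with a skewed distribution.
toy_counts = Counter({"i": 50, "self": 30, "value": 12, "tmp": 3,
                      "x1": 2, "x2": 1, "x3": 1, "x4": 1})
print(identifiers_for_coverage(toy_counts), "of", len(toy_counts))  # prints "3 of 8"
```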

So, it’s not some deep issue with the data (our code); it’s a problem of how the data is organized and fed into a tool.

Maybe it can be solved by using an algorithm that trains on sub-words rather than words. Such an algorithm wouldn’t have to deal with vocabularies of millions of words, but it would be more resource-intensive.
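Here is a simplified illustration of the sub-word idea. Real systems usually learn their sub-word units from data, e.g. with byte-pair encoding. This sketch swaps in a hand-written rule instead: it splits identifiers on camelCase, PascalCase, acronym, digit, and snake_case boundaries. It shows two effects: the vocabulary shrinks, and an identifier never seen before can still be made entirely of known pieces.

```python
import re
from typing import List

# Rule-based stand-in for a learned sub-word tokenizer:
# acronyms before a capitalized word, capitalized/lower-case words, acronyms, digits.
PIECE = re.compile(r"[A-Z]+(?=[A-Z][a-z])|[A-Z]?[a-z]+|[A-Z]+|\d+")


def subwords(identifier: str) -> List[str]:
    pieces = []
    for chunk in identifier.split("_"):  # snake_case boundaries first
        pieces.extend(p.lower() for p in PIECE.findall(chunk))
    return pieces


identifiers = ["getUserName", "setUserName", "getUserId", "setUserId",
               "getOrderId", "setOrderId", "user_id", "order_id"]
subword_vocab = {p for name in identifiers for p in subwords(name)}
# prints "8 identifiers -> 6 sub-words"
print(len(set(identifiers)), "identifiers ->", len(subword_vocab), "sub-words")

# An unseen identifier is still covered by known pieces.
print(all(p in subword_vocab for p in subwords("getOrderName")))  # prints True
```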

One way or another, the answer is always about how we organize the data and how quickly it can be processed.

Zoom out: evolution of AI

Let’s try to put those issues into perspective and see how they compare to difficulties that AI has had to overcome in other fields.

The history of neural networks has revolved around processing more data in less time. I didn’t quite get this at first: with neural networks, quantity is quality; the more data, the more complex and accurate the answers are.

People have been trying to build neural networks almost as long as there have been computers, but for most of that time, AI was dominated by a different school, the “rule-based” approach: tell your system the rules and watch it work; if it fails - ask an expert, make new rules. In contrast, neural networks figure out the rules themselves based on the data they process.

Why did the networks only “take off” in the past decade or two? Because that’s when we got large-scale data storage (cloud in particular), insane volumes of generated data (through IoT, among other things), and huge processing power (esp. GPU computing). Also, several innovations happened that greatly improved the efficiency of training deep neural networks, from Geoffrey Hinton’s work in the mid-2000s to the invention of transformers in 2017. Long story short, it became possible to process lots of data quickly.

Problems encountered in other fields

But just having the algorithms and hardware might not be enough. Healthcare is an area that gathers and stores large volumes of data, is always looking for cutting-edge tech, and attracts plenty of investment. So it’s no surprise that in some places and certain fields (like ophthalmology), AI has already found its way into daily usage.

However, there is still a long way to go before widespread adoption. In healthcare, unlike programming, there do exist models that outperform humans, but they mostly remain at the stage of development and keep failing in implementation.

The reason is that real-life data is "dirty" compared to the sets used to train the models:

- Data in hospitals is gathered and stored in different ways. A hospital usually has several systems that don't talk to each other except through PDF files.

- The data infrastructure differs from hospital to hospital, so you can't build large-scale datasets.

- Patients can come from different demographics than those whose data was used to train the models.

- Some signals that practitioners get are not digitized, which lowers the comparative efficiency of models.

- There are patient confidentiality issues when training models on local data sets.

Getting into the narrow spaces - or widening the spaces

Generally speaking, there are two ways around these obstacles: make the models fit the narrow spaces that real-life usage presents us with - or widen the spaces.

The large datasets used to train the top-performing models in healthcare are created through steps that can't be reproduced in real life for proprietary reasons. So models could be calibrated on local environments to improve their accuracy, while synthetic datasets can be used to preserve confidentiality.

But none of this will likely be very effective without changing the data collection infrastructure. The systems in place must interface with each other, data should be stored off-site, as proposed in the STRIDES initiative, and most importantly, it has to become more uniform.

Back to code

The reasons behind the problems of adoption in code and healthcare are, unsurprisingly, very different. But weirdly, the solutions are less so. We can expect the models to become cheaper and more effective at being re-trained to fit local contexts.

And we can change the way we organize the data. To make the networks that work with code and tests more efficient, we need more standardized ways of writing and storing code.

Many practices for what we consider ‘clean code’ aim to make code more readable for humans. Maybe we need ways to make it more readable for neural networks.

Or maybe it’s even simpler. If the neural networks can generate simple, repetitive code and are good at covering it with tests, then perhaps we should let them do both sides of the job and forget about clean coding practices altogether, instead concentrating on ways of controlling their output.

Summary

When a new technology comes along, there are two paths for adoption: first, the tech tries to fit some existing roles in the economy; then, the economy changes to accommodate the new tech full-scale.

The second path means a web of infrastructure is created to support the tech. If you’ve got cars, you’re also getting mass construction of new roads, changes to legislation, etc.

Those are the two paths open to AI in QA right now, and both can help marry the tech to the infrastructure needed to support it:

- making AI-driven tools that are better able to fit the local contexts where they will be applied, tools that can learn on a particular project and tech stack

- adapting the way tests are organized to the requirements of neural networks